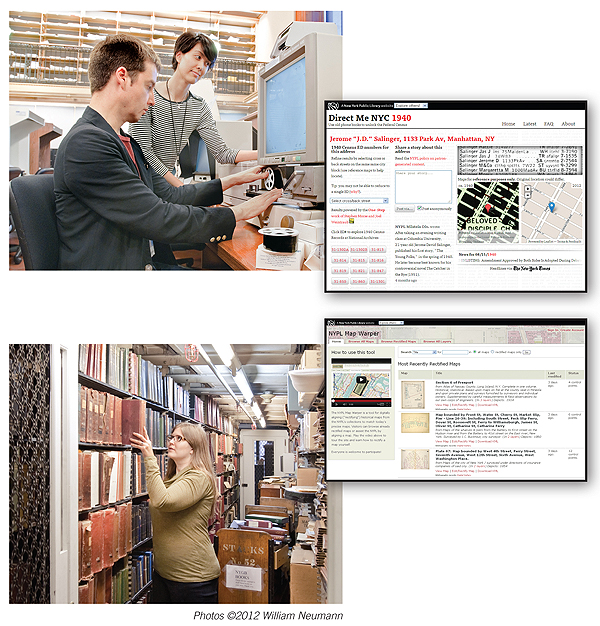

Ben Vershbow, manager, NYPL Labs (l.) and Matt Knutzen, NYPL geospatial librarian, compare old maps to their Warper-rectified counterparts.

The home base for the New York Public Library (NYPL) Labs is a strange mix of old and new. A bunch of modern cubicles hover incongruously amid the stately marble walls of what used to be a courtyard in the venerable Schwarzman Building, before the need for more space convinced the library to press it into service. It’s not a bad metaphor for what the labs do: turn the library’s substantial historical holdings into something new, useful, and a little bit quirky.

Thus far, the labs has spearheaded four projects, all of them aimed at not only digitizing physical collections but at turning their digital versions into data that can be sliced and diced with all of today’s tools. Ben Vershbow, manager of NYPL Labs, sees the first stage of his mission as “extending the machine-readable data so it can be recontextualized—the library as data clearinghouse.” As a vision, it adheres more strictly to the library’s traditional role of information collector and provider than many of today’s library reinventions—library as community center, for example. At the same time, it removes the “book warehouse” or even “digital book virtual warehouse” connotations by giving the library a front and center role in parsing the data into meaningful categories that make it usable.

The second stage can also be seen as applying a fairly old-school function of librarians—curation—to a brand new context. Vershbow argues for “curating interesting pathways through the data—the library as a big set of APIs [application programming interfaces]—for educators, artists, etc.”

Crowd control

All of the projects of NYPL Labs also incorporate a crowd-sourcing component, tapping into the expertise and enthusiasm of the library’s user base. This adds value in several ways. Most fundamentally, it allows volunteer labor to supplement the limited funding and staffing that even NYPL can afford to devote to cool-but-not-core projects. “Staffing is the biggest cost,” says Vershbow.

The labs’ staff of five (Vershbow, three product developers, and a product manager) may seem like a lot to a small suburban library, but it’s certainly not enough to transcribe, for example, 40,000 restaurant menus themselves. It also means much greater public buy-in than the library sees for projects in which the public is only a passive observer. Labs initiatives see “a lot more use, and sustained use, than static exhibits we’ve put up in the past,” Vershbow says.

More subtly, “a million heads on the Internet are better than one,” says Vershbow. Users influence development by telling the library what features they need to do their own work. “If institutions pay attention to how their materials are repurposed, they can get ideas for new services,” Vershbow says. Often, patrons take the library’s resources in directions the library never anticipated. “Our public starts to curate them, remix them, mash them up, create derivative works,” he says.

To librarians accustomed to relying on professional credentials, letting just anyone play can be a struggle. But at least at NYPL, worries about mischief have proven unfounded. “We’ve never had vandalism,” says Vershbow, even for projects where contributors can be anonymous. “People have treated it with great respect.” Not just the labs but the library as a whole is moving toward a greater role for patron contributions: the library recently introduced ratings and reviews features in its regular catalog. Vershbow also hopes to take citizen creation even further, with more on-site facilities for media creation, perhaps including 3-D printing.

DIGITIZE ME Philip Sutton and Sachiko Clayton (above) demonstrate microfilm reader predecessors of Direct Me. Carmen Nigro (below) of the Milstein Division pulls an as-yet-undigitized directory.

Food for thought

The labs team makes sure to design its projects in collaboration with the subject specialist librarians, not in isolation. For example, Rebecca Federman, culinary collections librarian, and Michael Inman, curator of rare books, act as coleads of the Menus Project. Yet when it launched over a year ago, “it was kind of an empty vessel,” Vershbow says. The labs team simply digitized menus from the library’s collection of more than 40,000 and called on users to help transcribe them. Interns check their work and make any corrections. Because the task is simple, no login is required to contribute, though the labs is considering implementing a tiered system because contributors are asking for it.

As of August 1, the project has transcribed its one millionth dish (Guinness Stout, a bargain at 25¢), as well as 14,000 menus, and is still going strong. So while the labs team made sure “to keep the transcription call to action very front and center,” in the redesign launched June 22 (to coincide with the Lunch Hour exhibit), Vershbow also wants to start providing ways to explore the data already generated, including by location. Users can already search for a dish from the collection on Menupages.

The labs released an API for the Menus Project on July 19, the library’s first publicly promoted and documented API, and plans to partner with other libraries with digitized menu collections. “We are in preliminary discussions with a lot of these organizations; we are discussing how we would confederate a little; maybe share the data and increase the overall data set,” says Vershbow. Eventually, he hopes to “build a historical Yelp,” which would contain information on theatrical and musical shows and other activities as well as food.

Charting a new course

Matt Knutzen, NYPL geospatial librarian, is in charge of the Map Warper, an ambitious attempt to connect all of the library’s many maps of New York City into a single, giant linked data set so that users can trace all the data the library possesses on a single location through time, or “stacks of time,” as Knutzen says. That’s a lot of data: for example, the library has all the names of all the old Coney Island rides, locations of businesses by year, and so on.

Essentially, the Map Warper relies on users to line up several points on an old map with the same points on a new, accurate one, such as the corners of Central Park. Where possible, the labs uses open source software: the maps project is built on Open Street Map, though the results can also be viewed in Google Earth. The library’s additions are themselves open source: other libraries can install this for their own map collections.

Because Map Warper is not as simple as menu transcription, users must take a class before they can join in on the fun: the library hosts “citizen cartographer” workshops every few weeks. A statistical reading checks up on how accurately the map has been warped, so the results can’t be compromised by accident (or on purpose).
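To make the idea of control points and an accuracy check concrete, here is a minimal Python sketch. It is not the Map Warper's code: the article does not say which transformation or statistic the tool uses, so the affine fit, the root-mean-square (RMS) error, and every function name below are assumptions chosen for illustration.

```python
from math import sqrt

def solve3(m, v):
    """Solve a 3x3 linear system m * p = v by Gauss-Jordan elimination."""
    a = [row[:] + [rhs] for row, rhs in zip(m, v)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(a[r][col]))
        if abs(a[pivot][col]) < 1e-12:
            raise ValueError("need at least three non-collinear control points")
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(3):
            if r != col:
                f = a[r][col] / a[col][col]
                a[r] = [x - f * y for x, y in zip(a[r], a[col])]
    return [a[i][3] / a[i][i] for i in range(3)]

def fit_affine(pairs):
    """Least-squares affine fit.

    pairs: list of ((px, py), (mx, my)), where (px, py) is a point on the
    scanned map and (mx, my) is the matching point on the modern map.
    Returns coefficient triples (cx, cy) with mx ~ cx[0]*px + cx[1]*py + cx[2],
    and likewise for my.
    """
    rows = [(px, py, 1.0) for (px, py), _ in pairs]
    targets = [target for _, target in pairs]
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]

    def solve_axis(k):
        atb = [sum(r[i] * t[k] for r, t in zip(rows, targets)) for i in range(3)]
        return solve3(ata, atb)

    return solve_axis(0), solve_axis(1)

def rms_error(pairs, cx, cy):
    """RMS distance between the warped control points and their targets."""
    total = 0.0
    for (px, py), (mx, my) in pairs:
        ex = cx[0] * px + cx[1] * py + cx[2] - mx
        ey = cy[0] * px + cy[1] * py + cy[2] - my
        total += ex * ex + ey * ey
    return sqrt(total / len(pairs))

# Hypothetical control points: four corners of a scanned map sheet.
good = [((0, 0), (10, 20)), ((100, 0), (210, 20)),
        ((0, 100), (10, 320)), ((100, 100), (210, 320))]
# Same points, but the last one was clicked in the wrong place.
bad = good[:3] + [((100, 100), (260, 320))]

good_rms = rms_error(good, *fit_affine(good))
bad_rms = rms_error(bad, *fit_affine(bad))
```

In this sketch a careless or malicious control point shows up as a larger RMS value, which is the kind of signal a statistical check could use to flag a warp for review.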

The library also recently got a grant to build a digital gazetteer (a database of historical locations and place names in New York City). Called the New York Chronology of Place, it is directly connected to the Map Warper: it will be populated with data gained from the Map Warper as well as from existing sources.

BETTER THAN SPIDER-MAN NYPL Labs staff (l.–r.) Mauricio Giraldo, Zeeshan Lakhani, Vershbow, and David Riordan test the Stereogranimator (inset) with NYPL 3-D glasses in the labs offices, set against the backdrop of the stately Schwarzman Building.

Seeing the past in 3-D

The Stereogranimator is unique among the labs’ projects in that not only does it welcome user input, but it was inspired by a user in the first place. Artist Joshua Heineman originally noticed by accident, while downloading images to his laptop, that flickering digital images mimicked the effect of a 19th-century stereograph; he then set out to re-create it. (Users in the 1800s viewed pairs of nearly identical images in a stereoscope to create a 3-D effect.) The Stereogranimator, launched in January, builds on Heineman’s technique to let users produce animated GIFs from NYPL’s collection of more than 40,000 stereographs.

The process depends on more than just NYPL’s holdings: the labs has set up the process through the Flickr API, so that other libraries, starting with Boston Public Library (BPL), can easily participate. “They had actually contemplated a similar tool with their stereograph, so when they saw us, they were like, ‘Oh my God, can we play, too?’ And we like to play with others,” Vershbow says.

BPL has already had some of its holdings animated, and Vershbow hopes to bring in other libraries soon. “I am doing some outreach to the Flickr commons community,” he said. “Now that we’ve done it for Boston, to do it for others would be quite simple. Flickr provides, for images, the kind of cross-institutional playground that I would love to see more broadly.”

Counting on the census

NYPL Labs’ latest and most ambitious undertaking is Direct Me NYC 1940. Inspired by the recent release of the 1940 census data, Maira Liriano, manager of the library’s Milstein Division, explained to the labs team what kind of things they would be helping users to do and ultimately became the curatorial lead of a project to do it better.

Because the census data was not indexed on its April 2 release date, users trying to find a specific person would first be directed to the microfilm room with a call number for a phone book to find an address, then to a series of websites called OneStep. Developed by Stephen Morse with volunteer contributions, the websites coordinate streets with specific census enumeration districts.

Direct Me streamlined this workflow by digitizing the New York City phone books of the period. Then the labs built a data entry form in a program called Document Viewer, so that once users find an address, they can query it against Morse’s data set and see results on the fly, then narrow the results further by selecting cross streets. Says Vershbow, “You usually can get it down to a small handful” of census enumeration districts, each about half of a city block.
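The narrowing step can be pictured as a set intersection: each street maps to the enumeration districts it touches, and each cross street the user selects removes districts that do not also touch it. The Python sketch below illustrates that idea only. The street names, district numbers, and function name are invented for the example and are not taken from Direct Me or from Morse's data set.

```python
# Hypothetical index: street name -> enumeration districts it passes through.
STREET_TO_DISTRICTS = {
    "W 42 ST": {"31-100", "31-101", "31-102"},
    "5 AVE": {"31-101", "31-102", "31-250"},
    "6 AVE": {"31-102", "31-300"},
}

def candidate_districts(street, cross_streets=(), index=STREET_TO_DISTRICTS):
    """Return districts on `street` that also touch every cross street."""
    candidates = set(index.get(street, set()))
    for cross in cross_streets:
        # Keep only the districts this cross street shares with the rest.
        candidates &= index.get(cross, set())
    return sorted(candidates)

street_only = candidate_districts("W 42 ST")
one_cross = candidate_districts("W 42 ST", ["5 AVE"])
two_cross = candidate_districts("W 42 ST", ["5 AVE", "6 AVE"])
```

With the made-up data above, the street alone yields three candidate districts, one cross street cuts that to two, and a second cross street leaves one, mirroring the "small handful" Vershbow describes.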

Users can also post an annotation to the address and see it on two maps, a geological survey map from 1940 and the contemporary Open Street Map. “I think the directories and the maps are a kind of convergence course,” says Vershbow, but, in fact, at NYPL Labs, almost everything seems to connect back to maps: the team wants to connect the Menus Project to maps as well.

Some playful features are included, too: the little excerpt of the phone book the user has “ripped out” shows up surrounding the address, along with headlines from the New York Times on the same day in 1940 as the date the user is visiting the website (a collaboration with the Times).

Document Viewer is also open source, produced by Document Cloud, a publicly funded project for viewing open source documents. The Direct Me project does double duty as a test run for using the program, which Vershbow hopes the library may work with in future to serve archives and other content beyond the labs.

While the value of Direct Me NYC 1940 has lessened now that Ancestry.com has finished indexing the census, people are still using it, and it remains a free way in, as well as a test case for the value of directory data. Librarians from the NYPL’s Milstein Division and around the library have been using Direct Me to find notable figures, including Thurgood Marshall and Billie Holiday. “That is sort of a playful feature, but what it does suggest very powerfully is that these very ephemeral things that were considered throwaways contain massive amounts of data. You can do cultural history on the street level. We have that kind of New York City ghost bank, all the people who have lived here and all their stories,” says Vershbow. He wants to digitize other directories (NYPL has them going back as far as the 1800s) and create a time line of the results.

Digging into digital

While not every library has the luxury of devoting full-time staffers to creating projects like those the labs undertakes, most libraries can take advantage of one or more of these takeaways: reaching out to and trusting their patron base to participate in cocreation; mining their existing strong collections for new projects; using open source tools, including those created by larger libraries, to do more for less; and looking at the ways patrons use resources to see what tools they need the most.
